feat(copilot): standard/advanced model toggle with Opus rate-limit multiplier #12786


Adds `Flag.COPILOT_MODEL` (string flag) and a `_resolve_user_model_override` helper that fetches the flag value per-request and overrides the SDK model for that user's turn.

- `feature_flag.py`: add `Flag.COPILOT_MODEL = "copilot-model"` and `_env_flag_override_string` for local dev/test string overrides
- `service.py`: after `_resolve_sdk_model()`, await user override and replace `sdk_model` if LD returns a non-empty string for the user
- Tests: 12 new tests for `_env_flag_override_string` and `_resolve_user_model_override` (all passing)

Local dev: set `FORCE_FLAG_COPILOT_MODEL=anthropic/claude-opus-4-6` (or `NEXT_PUBLIC_FORCE_FLAG_COPILOT_MODEL`) to route all users to Opus without touching LaunchDarkly.
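The local-dev override described above might be read roughly as follows. This is a minimal sketch, not the PR's implementation: the function name `read_string_flag_override`, the key-to-variable-name conversion, and the order in which the two variables are checked are assumptions. Only the flag key `copilot-model` and the two environment variable names come from the description.

```python
from __future__ import annotations

import os
from collections.abc import Mapping


def read_string_flag_override(
    flag_key: str, environ: Mapping[str, str] | None = None
) -> str | None:
    """Return a non-empty string override for flag_key, or None if unset.

    Hypothetical helper: "copilot-model" is mapped to FORCE_FLAG_COPILOT_MODEL,
    with NEXT_PUBLIC_FORCE_FLAG_COPILOT_MODEL checked second.
    """
    env = os.environ if environ is None else environ
    suffix = flag_key.upper().replace("-", "_")
    for name in (f"FORCE_FLAG_{suffix}", f"NEXT_PUBLIC_FORCE_FLAG_{suffix}"):
        value = env.get(name, "").strip()
        if value:  # blank or whitespace-only values count as "not set"
            return value
    return None
```

Passing the environment as a parameter keeps the helper easy to test without mutating `os.environ`.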

… user_id to log, add LD flag key test

Self-review fixes:

- `env_flag_string_override`: remove leading underscore; the function is part of the public API surface (used across modules), so the private prefix was wrong
- `_resolve_user_model_override`: include truncated user_id in the override log line for easier correlation in prod logs
- Add test asserting get_feature_flag_value is called with the correct flag key ("copilot-model") and user_id

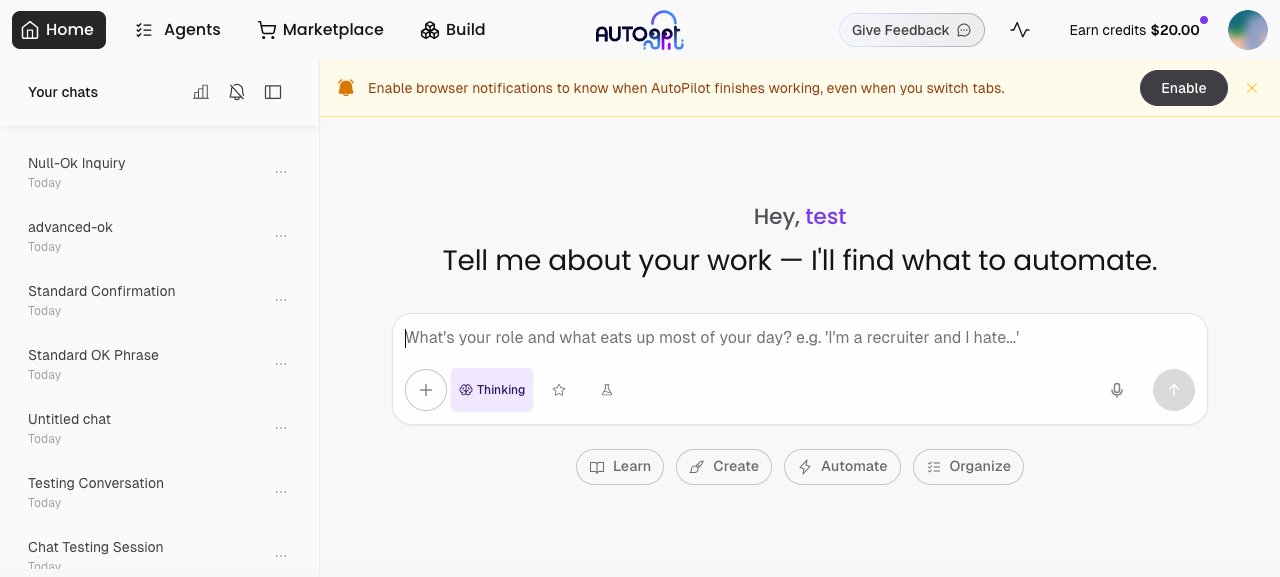

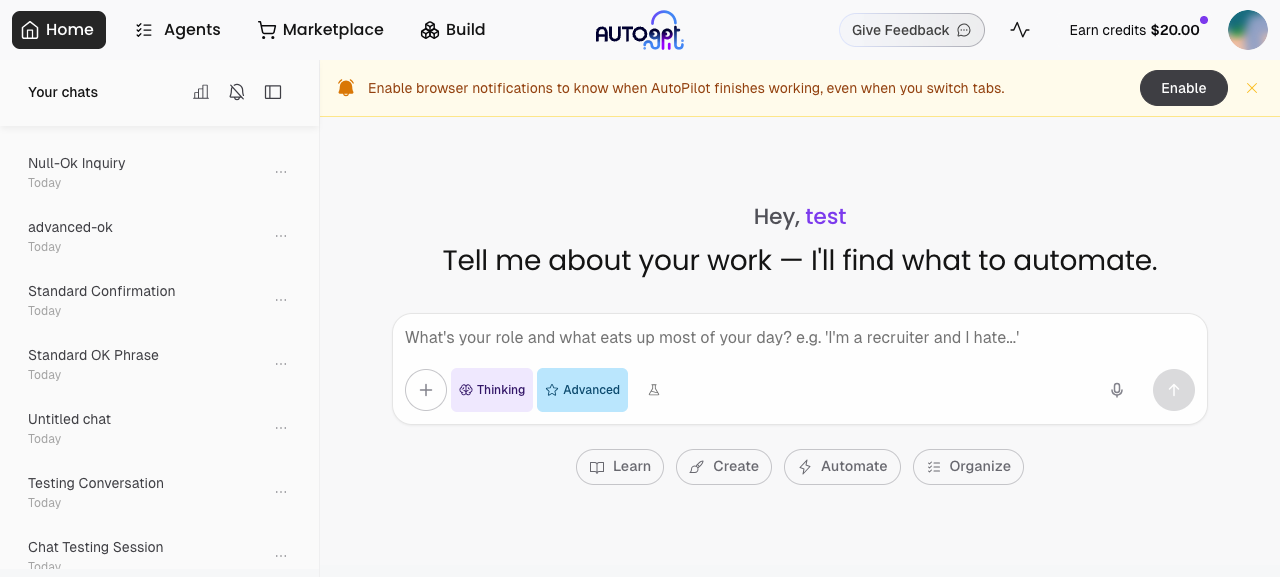

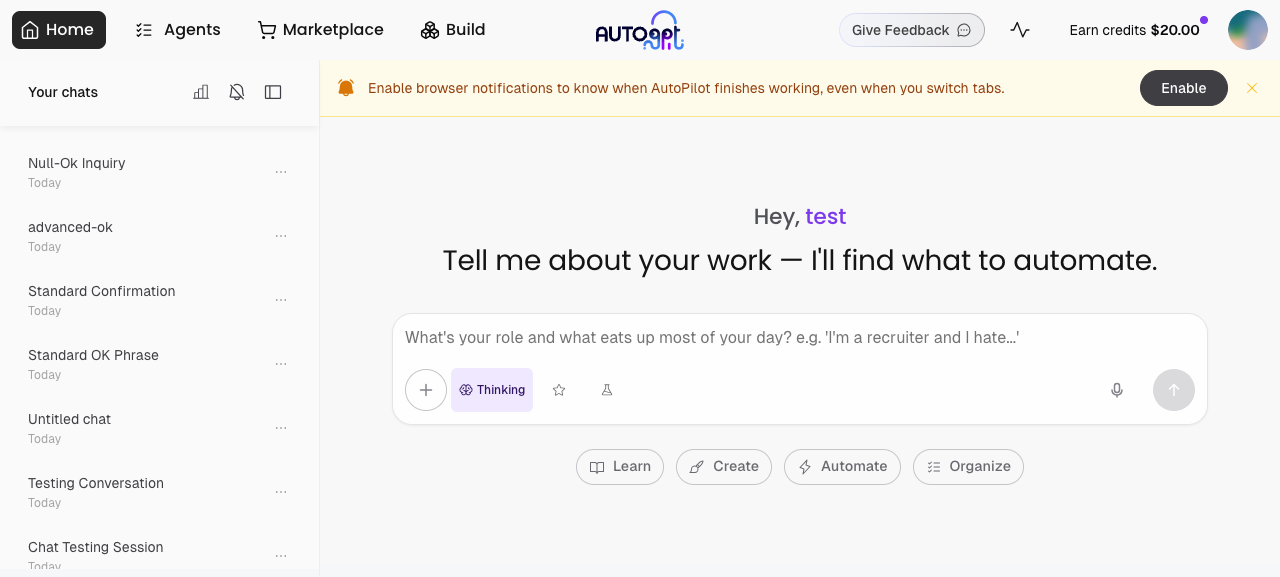

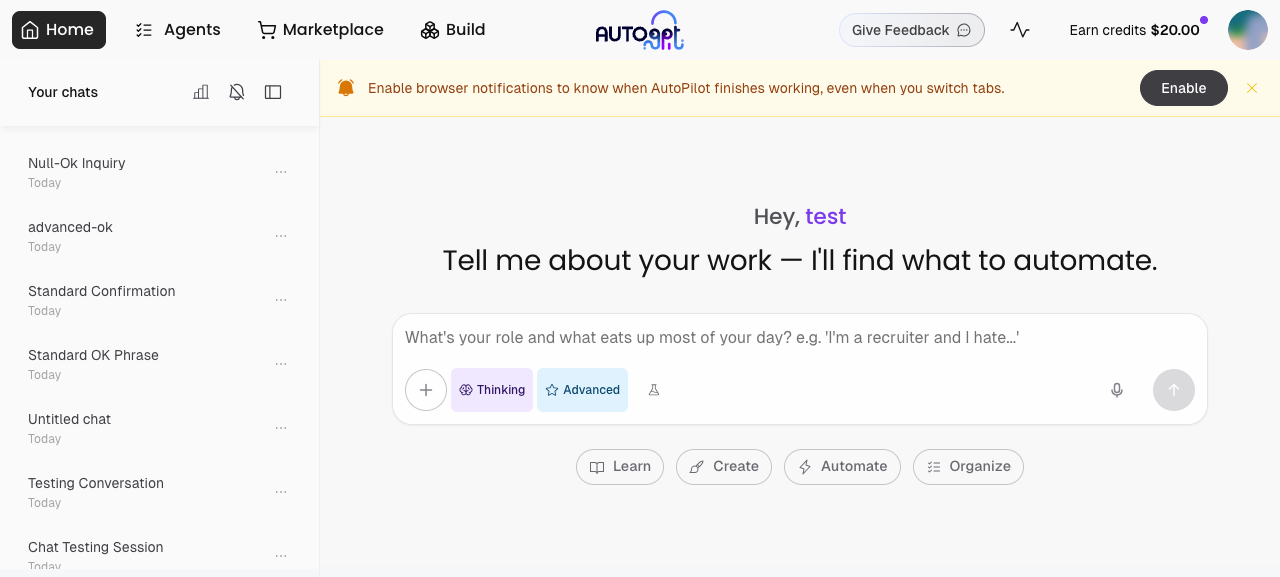

…ltiplier

Add a per-request model tier toggle to CoPilot. Users can switch between Standard (Sonnet) and Advanced (Opus) from the chat input toolbar. Opus turns consume rate-limit quota 5× faster (matching Anthropic pricing), so no separate entitlement gate is needed: usage self-limits via the budget.

- Add `CopilotLlmModel = Literal["standard", "advanced"]` type
- ModelToggleButton (sky-blue, star icon, label only when active)
- localStorage persistence via Key.COPILOT_MODEL
- Backend: model tier passed in request body, resolved to actual model name
- Rate-limit multiplier 5.0 for Opus in record_token_usage (Redis only, does not affect PlatformCostLog or cost_usd; those use real API values)
- Reduce claude_agent_max_budget_usd default from $15 to $10
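To make the "5× faster" claim concrete, here is a small sketch of how a weighted counter drains a fixed quota. The 5.0 multiplier and the tier names come from the description; `TOKEN_QUOTA`, `TIER_MULTIPLIER` and `turns_until_exhausted` are hypothetical names with made-up values, and plain arithmetic stands in for the Redis counters.

```python
from typing import Literal

CopilotLlmModel = Literal["standard", "advanced"]

# 5.0 is the multiplier stated in the PR; the quota below is illustrative only.
TIER_MULTIPLIER: dict[str, float] = {"standard": 1.0, "advanced": 5.0}
TOKEN_QUOTA = 1_000_000


def turns_until_exhausted(tier: CopilotLlmModel, tokens_per_turn: int) -> int:
    """Count how many identical turns fit in the quota for the given tier."""
    # Each turn is charged its raw token count scaled by the tier multiplier.
    weighted_tokens = round(tokens_per_turn * TIER_MULTIPLIER[tier])
    return TOKEN_QUOTA // weighted_tokens


print(turns_until_exhausted("standard", 10_000))
print(turns_until_exhausted("advanced", 10_000))
```

Under these assumptions the same quota covers one fifth as many Advanced turns as Standard turns, which is why the description argues no separate entitlement gate is needed.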



Walkthrough

Adds an optional per-request Copilot LLM model-tier ("standard" | "advanced") end-to-end: frontend toggle and persisted store state, API/schema change, server-side resolution (env > LD > request > config), threading model into enqueue/processor/stream, and applying a model_cost_multiplier into usage persistence and rate limiting.

Sequence Diagram(s)

sequenceDiagram

participant User as Frontend/User

participant UI as ChatInput UI

participant Store as Copilot UI Store

participant API as Backend API

participant SDK as Copilot SDK/Service

participant LD as LaunchDarkly

participant Exec as Executor

participant Tracker as Token Tracker

User->>UI: toggle model

UI->>Store: setCopilotLlmModel("advanced"|"standard")

Store->>Store: persist Key.COPILOT_MODEL

UI->>API: POST /stream-chat (model=<selected>|null)

API->>SDK: stream_chat_completion_sdk(..., model=request.model)

SDK->>LD: get_feature_flag_value(user_id, "copilot-model")

LD-->>SDK: flag value or None

SDK->>SDK: resolve final sdk_model and model_cost_multiplier (env > LD > request > config)

SDK->>Exec: enqueue_copilot_turn(..., model=resolved_model)

Exec->>Tracker: persist_and_record_usage(..., model_cost_multiplier)

Tracker->>Tracker: apply multiplier (clamp ≥1.0), round, update counters
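The resolution order and multiplier handling in the diagram can be sketched as below. This is an illustration under stated assumptions, not the service code: `resolve_model`, `cost_multiplier_for`, `weighted_tokens`, the `tier_models` mapping and the Sonnet model string are hypothetical. The precedence (env > LD > request > config), the `"opus" in sdk_model` check, the 5.0 value and the clamp-then-round step are taken from the PR text.

```python
from __future__ import annotations

OPUS_COST_MULTIPLIER = 5.0  # value from the PR


def resolve_model(
    env_override: str | None,
    ld_value: str | None,
    requested_tier: str | None,
    config_model: str,
    tier_models: dict[str, str],
) -> str:
    """Pick the model with precedence env > LaunchDarkly > request > config."""
    if env_override:
        return env_override
    if ld_value:
        return ld_value
    if requested_tier in tier_models:
        return tier_models[requested_tier]
    return config_model


def cost_multiplier_for(model: str | None) -> float:
    """Derive the multiplier from the final model name, not the requested tier."""
    return OPUS_COST_MULTIPLIER if model and "opus" in model else 1.0


def weighted_tokens(raw_tokens: int, multiplier: float) -> int:
    """Clamp the multiplier to at least 1.0, then round to whole tokens."""
    return round(raw_tokens * max(1.0, multiplier))


# Hypothetical tier mapping; only the Opus name appears in the PR.
tiers = {
    "standard": "anthropic/claude-sonnet-x",
    "advanced": "anthropic/claude-opus-4-6",
}
model = resolve_model(None, None, "advanced", "config-default-model", tiers)
print(model, weighted_tokens(1_000, cost_multiplier_for(model)))
```

Deriving the multiplier from the resolved model name keeps the charge consistent even when an environment or LaunchDarkly override replaces the tier the user asked for.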

Estimated code review effort: 🎯 4 (Complex) | ⏱️ ~45 minutes


🚥 Pre-merge checks | ✅ 2 | ❌ 1

❌ Failed checks (1 warning)

✅ Passed checks (2 passed)

🔍 PR Overlap Detection

This check compares your PR against all other open PRs targeting the same branch to detect potential merge conflicts early.

🔴 Merge Conflicts Detected

The following PRs have been tested and will have merge conflicts if merged after this PR. Consider coordinating with the authors.

🟡 Medium Risk: Some Line Overlap

These PRs have some overlapping changes:

🟢 Low Risk: File Overlap Only

These PRs touch the same files but different sections

Summary: 6 conflict(s), 1 medium risk, 10 low risk (out of 17 PRs with file overlap)

Codecov Report

❌ Patch coverage is

Additional details and impacted files

| Coverage Diff | dev | #12786 | +/- |
|---|---|---|---|
| Coverage | 64.64% | 64.63% | -0.01% |
| Files | 1819 | 1820 | +1 |
| Lines | 133985 | 134044 | +59 |
| Branches | 14428 | 14433 | +5 |
| Hits | 86615 | 86644 | +29 |
| Misses | 44671 | 44696 | +25 |
| Partials | 2699 | 2704 | +5 |


…el name

- Rename store state: copilotMode → copilotChatMode, copilotModel → copilotLlmModel (matching the CopilotMode/CopilotLlmModel type names for consistency)
- Inline model_cost_multiplier into the advanced/standard/else branches (cleaner: no separate computation block after the if/else)
- Fix model name in PlatformCostLog: pass sdk_model instead of config.model so cost dashboard shows "claude-opus-4-6" when Opus is actually used
- Fix stale docstring: 'opus'/'sonnet' → 'advanced'/'standard'

…icant-Gravitas/AutoGPT into feat/copilot-model-targeting-flag


🧹 Nitpick comments (1)

autogpt_platform/backend/backend/copilot/sdk/service.py (1)

2556-2563: Consider extracting the cost multiplier constant to module level.

The multiplier logic is correct (5× aligns with Opus/Sonnet pricing ratio). However, `_OPUS_COST_MULTIPLIER` is defined inside the function body, which is inconsistent with other module-level constants like `_MAX_STREAM_ATTEMPTS` and `_EMPTY_TOOL_CALL_LIMIT`.

♻️ Suggested refactor

Move the constant to module level (e.g., near line 138 with other constants):

    +# Cost multiplier for Opus model turns — Opus is ~5× more expensive than Sonnet
    +# ($15/$75 vs $3/$15 per M tokens). Applied to rate-limit counters so Opus
    +# turns deplete quota proportionally faster.
    +_OPUS_COST_MULTIPLIER = 5.0
    +
     _EMPTY_TOOL_CALL_LIMIT = 5

Then at line 2560:

    -    _OPUS_COST_MULTIPLIER = 5.0
         model_cost_multiplier = (
             _OPUS_COST_MULTIPLIER if sdk_model and "opus" in sdk_model else 1.0
         )

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@autogpt_platform/backend/backend/copilot/sdk/service.py` around lines 2556 - 2563, Move the local constant _OPUS_COST_MULTIPLIER out of the function and declare it at module level alongside other constants like _MAX_STREAM_ATTEMPTS and _EMPTY_TOOL_CALL_LIMIT; then remove the in-function definition and keep the existing computation that sets model_cost_multiplier = (_OPUS_COST_MULTIPLIER if sdk_model and "opus" in sdk_model else 1.0) so behavior is unchanged. Ensure the module-level _OPUS_COST_MULTIPLIER is named exactly the same and referenced by the model_cost_multiplier expression (which uses sdk_model) so no other callers need changes.


📒 Files selected for processing (18)

- autogpt_platform/backend/backend/api/features/chat/routes.py
- autogpt_platform/backend/backend/copilot/config.py
- autogpt_platform/backend/backend/copilot/executor/processor.py
- autogpt_platform/backend/backend/copilot/executor/utils.py
- autogpt_platform/backend/backend/copilot/rate_limit.py
- autogpt_platform/backend/backend/copilot/sdk/service.py
- autogpt_platform/backend/backend/copilot/sdk/service_helpers_test.py
- autogpt_platform/backend/backend/copilot/token_tracking.py
- autogpt_platform/backend/backend/copilot/token_tracking_test.py
- autogpt_platform/backend/backend/util/feature_flag.py
- autogpt_platform/backend/backend/util/feature_flag_test.py
- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/ChatInput.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/components/ModelToggleButton.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/components/__tests__/ModelToggleButton.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/store.ts
- autogpt_platform/frontend/src/app/(platform)/copilot/useCopilotPage.ts
- autogpt_platform/frontend/src/app/(platform)/copilot/useCopilotStream.ts
- autogpt_platform/frontend/src/services/storage/local-storage.ts


Actionable comments posted: 2

🧹 Nitpick comments (2)

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts (2)

183-203: Extend `clearCopilotLocalData` test to verify model-tier reset.

Line 193 checks `copilotChatMode` reset, but this flow now also resets `copilotLlmModel`. Adding this assertion will protect the new Standard/Advanced behavior from regressions.

Proposed patch

     it("resets state and clears localStorage keys", () => {
       useCopilotUIStore.getState().setCopilotChatMode("fast");
    +  useCopilotUIStore.getState().setCopilotLlmModel("advanced");
       useCopilotUIStore.getState().setNotificationsEnabled(true);
       useCopilotUIStore.getState().toggleSound();
       useCopilotUIStore.getState().addCompletedSession("s1");

       useCopilotUIStore.getState().clearCopilotLocalData();

       const state = useCopilotUIStore.getState();
       expect(state.copilotChatMode).toBe("extended_thinking");
    +  expect(state.copilotLlmModel).toBe("standard");
       expect(state.isNotificationsEnabled).toBe(false);

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts` around lines 183 - 203, The test for clearCopilotLocalData needs to assert that the copilotLlmModel is reset to its default (to protect Standard/Advanced regression); update the "clearCopilotLocalData" spec in store.test.ts to call useCopilotUIStore.getState().clearCopilotLocalData() (already done) and add an expectation on useCopilotUIStore.getState().copilotLlmModel (or the getter) to equal the default model value (e.g., "standard" or whatever the store uses) after the call, referencing the clearCopilotLocalData and copilotLlmModel symbols.

15-26: Reset `copilotLlmModel` in `beforeEach` to keep tests isolated.

Line 17 currently resets only part of the store. Adding `copilotLlmModel: "standard"` here will prevent state leakage if a future test mutates model tier.

Proposed patch

     beforeEach(() => {
       window.localStorage.clear();
       useCopilotUIStore.setState({
         initialPrompt: null,
         sessionToDelete: null,
         isDrawerOpen: false,
         completedSessionIDs: new Set<string>(),
         isNotificationsEnabled: false,
         isSoundEnabled: true,
         showNotificationDialog: false,
         copilotChatMode: "extended_thinking",
    +    copilotLlmModel: "standard",
       });
     });

🤖 Prompt for AI Agents

Verify each finding against the current code and only fix it if needed. In `@autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts` around lines 15 - 26, The beforeEach setup in the test does not reset the copilotLlmModel, which can cause cross-test state leakage; update the useCopilotUIStore.setState invocation inside the beforeEach to include copilotLlmModel: "standard" so the store is fully reset before each test (locate the beforeEach block and the useCopilotUIStore.setState call in store.test.ts and add the copilotLlmModel property to the state object).

🤖 Prompt for all review comments with AI agents

Verify each finding against the current code and only fix it if needed.

Inline comments:

In `@autogpt_platform/backend/backend/copilot/sdk/service.py`:

- Around line 2535-2560: The current per-request override sets model_cost_multiplier based on the requested "model" value (e.g., in the branch that handles model == "advanced" / "standard"), but that value is never recomputed if sdk_model is later changed by the SDK fallback path; update the logic so that after any fallback or final model resolution (i.e., anywhere sdk_model may be reassigned after _normalize_model_name or fallback handling), you recompute and persist both the effective model name and model_cost_multiplier from the final sdk_model (use a check like "if sdk_model and 'opus' in sdk_model then multiplier=5.0 else 1.0"), and ensure the persisted "model" string reflects the final sdk_model rather than the original requested override; modify the code paths that set sdk_model/model_cost_multiplier (including the advanced/standard branches and the SDK fallback activation path) to enforce this re-evaluation.
- Around line 3245-3247: The variables sdk_model and model_cost_multiplier must be initialized before the try block to avoid UnboundLocalError when the early return in the sdk_cwd exception handler skips their later assignments; add default initializations (e.g., sdk_model: str | None = None and model_cost_multiplier = 1.0) immediately before the try that contains the sdk_cwd handling so the finally block (which uses sdk_model and model_cost_multiplier) can always reference them safely.
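Both inline comments can be illustrated with one small sketch. It is a simplified stand-in for the streaming function, not the actual code: `run_turn`, its parameters and the `recorded` dict are hypothetical, and the early exit is simulated with a flag rather than the real sdk_cwd exception handler. It assumes only what the comments state: defaults assigned before the `try`, and a multiplier recomputed from the final model.

```python
from __future__ import annotations


def run_turn(
    fail_early: bool, requested_model: str, fallback_model: str | None = None
) -> dict:
    # Defaults assigned before the try, so the finally block can always read
    # them even when an early return skips the assignments below.
    sdk_model: str | None = None
    model_cost_multiplier = 1.0
    recorded: dict = {}
    try:
        if fail_early:
            return recorded  # early exit; the finally block still runs
        sdk_model = requested_model
        if fallback_model is not None:
            sdk_model = fallback_model  # a fallback replaces the requested model
        # Recompute from the final model rather than the requested one.
        model_cost_multiplier = 5.0 if "opus" in sdk_model else 1.0
        return recorded
    finally:
        # Stand-in for usage persistence: record what was actually used.
        recorded["model"] = sdk_model
        recorded["multiplier"] = model_cost_multiplier


print(run_turn(True, "anthropic/claude-opus-4-6"))
print(run_turn(False, "anthropic/claude-opus-4-6", "fallback-model-x"))
```

Without the two default assignments, the early-return path would raise `UnboundLocalError` inside `finally`; without the recomputation, a turn that fell back to a non-Opus model would still be charged at 5×.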



📒 Files selected for processing (6)

- autogpt_platform/backend/backend/copilot/sdk/service.py
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts
- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/ChatInput.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/store.ts
- autogpt_platform/frontend/src/app/(platform)/copilot/useCopilotPage.ts

🚧 Files skipped from review as they are similar to previous changes (3)

- autogpt_platform/frontend/src/app/(platform)/copilot/useCopilotPage.ts

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/ChatInput.tsx

- autogpt_platform/frontend/src/app/(platform)/copilot/store.ts

📜 Review details

⏰ Context from checks skipped due to timeout of 90000ms. You can increase the timeout in your CodeRabbit configuration to a maximum of 15 minutes (900000ms). (7)

- GitHub Check: check API types

- GitHub Check: integration_test

- GitHub Check: end-to-end tests

- GitHub Check: test (3.13)

- GitHub Check: test (3.12)

- GitHub Check: test (3.11)

- GitHub Check: Check PR Status

🧰 Additional context used

📓 Path-based instructions (16)

autogpt_platform/frontend/**/*.{ts,tsx,js,jsx}

📄 CodeRabbit inference engine (.github/copilot-instructions.md)

autogpt_platform/frontend/**/*.{ts,tsx,js,jsx}: Use Node.js 21+ with pnpm package manager for frontend development

Always run 'pnpm format' for formatting and linting code in frontend development

Format frontend code using `pnpm format`

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/**/*.{tsx,ts}

📄 CodeRabbit inference engine (.github/copilot-instructions.md)

autogpt_platform/frontend/**/*.{tsx,ts}: Use function declarations for components and handlers (not arrow functions) in React components

Only use arrow functions for small inline lambdas (map, filter, etc.) in React components

Use PascalCase for component names and camelCase with 'use' prefix for hook names in React

Use Tailwind CSS utilities only for styling in frontend components

Use design system components from 'src/components/' (atoms, molecules, organisms) in frontend development

Never use 'src/components/legacy/' in frontend code

Only use Phosphor Icons (@phosphor-icons/react) for icons in frontend components

Use generated API hooks from '@/app/api/generated/endpoints/' instead of deprecated 'BackendAPI' or 'src/lib/autogpt-server-api/'

Use React Query for server state (via generated hooks) in frontend development

Default to client components ('use client') in Next.js; only use server components for SEO or extreme TTFB needs

Use 'ErrorCard' component for rendering errors in frontend UI; use toast notifications for mutation errors; use 'Sentry.captureException()' for manual exceptions

Separate render logic from data/behavior in React components; keep comments minimal (code should be self-documenting)

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/**/*.{ts,tsx}

📄 CodeRabbit inference engine (.github/copilot-instructions.md)

autogpt_platform/frontend/**/*.{ts,tsx}: No barrel files or 'index.ts' re-exports in frontend code

Regenerate API hooks with 'pnpm generate:api' after backend OpenAPI spec changes in frontend development

autogpt_platform/frontend/**/*.{ts,tsx}: Fully capitalize acronyms in symbols, e.g. `graphID`, `useBackendAPI`

Use function declarations (not arrow functions) for components and handlers

No `dark:` Tailwind classes; the design system handles dark mode

Use Next.js `<Link>` for internal navigation; never raw `<a>` tags

No `any` types unless the value genuinely can be anything

No linter suppressors (`// @ts-ignore`, `// eslint-disable`); fix the actual issue

Keep files under ~200 lines; extract sub-components or hooks into their own files when a file grows beyond this

Keep render functions and hooks under ~50 lines; extract named helpers or sub-components when they grow longer

Use generated API hooks from `@/app/api/generated/endpoints/` with pattern `use{Method}{Version}{OperationName}` and regenerate with `pnpm generate:api`

Do not use `useCallback` or `useMemo` unless asked to optimise a given function

Separate render logic (`.tsx`) from business logic (`use*.ts` hooks)

Use ErrorCard for render errors, toast for mutations, and Sentry for exceptions in the frontend

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/src/**/*.{ts,tsx}

📄 CodeRabbit inference engine (AGENTS.md)

autogpt_platform/frontend/src/**/*.{ts,tsx}: Use generated API hooks from `@/app/api/__generated__/endpoints/` following the pattern `use{Method}{Version}{OperationName}`, and regenerate with `pnpm generate:api`

Separate render logic from business logic using component.tsx + useComponent.ts + helpers.ts pattern, colocate state when possible and avoid creating large components, use sub-components in local `/components` folder

Use function declarations for components and handlers, use arrow functions only for callbacks

Do not use `useCallback` or `useMemo` unless asked to optimise a given function

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/**/*.{tsx,css}

📄 CodeRabbit inference engine (AGENTS.md)

Use Tailwind CSS only for styling, use design tokens, and use Phosphor Icons only

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/**/*.tsx

📄 CodeRabbit inference engine (AGENTS.md)

Component props should use `interface Props { ... }` (not exported) unless the interface needs to be used outside the component

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/**/*.{ts,tsx}

📄 CodeRabbit inference engine (AGENTS.md)

Never type with `any`, if no types available use `unknown`

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/**/*.{test,spec}.{ts,tsx}

📄 CodeRabbit inference engine (AGENTS.md)

autogpt_platform/frontend/**/*.{test,spec}.{ts,tsx}: Use Vitest + RTL + MSW for integration tests as the primary testing approach (~90%, page-level), use Playwright for E2E critical flows, and use Storybook for design system components

Run frontend integration tests with `pnpm test:unit` (Vitest + RTL + MSW)

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/src/app/(platform)/**/components/**/*.tsx

📄 CodeRabbit inference engine (autogpt_platform/frontend/AGENTS.md)

Put sub-components in local `components/` folder

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/**/*.tsx

📄 CodeRabbit inference engine (autogpt_platform/frontend/AGENTS.md)

autogpt_platform/frontend/**/*.tsx: Component props should be `type Props = { ... }` (not exported) unless it needs to be used outside the component

Use design system components from `src/components/` (atoms, molecules, organisms)

Never use `src/components/__legacy__/*`

Tailwind CSS only for styling, use design tokens, Phosphor Icons only

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/**/__tests__/**/*.test.{ts,tsx}

📄 CodeRabbit inference engine (autogpt_platform/frontend/AGENTS.md)

Write integration tests in `__tests__/` next to `page.tsx` using Vitest + RTL + MSW for new pages/features

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/src/**/__tests__/**/*.test.{ts,tsx}

📄 CodeRabbit inference engine (autogpt_platform/frontend/AGENTS.md)

Use Orval-generated MSW handlers from `@/app/api/__generated__/endpoints/{tag}/{tag}.msw.ts` for API mocking

Files:

- autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx
- autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/**/*.ts

📄 CodeRabbit inference engine (AGENTS.md)

No barrel files or `index.ts` re-exports in the frontend

Do not type hook returns, let Typescript infer as much as possible

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/frontend/src/**/*.ts

📄 CodeRabbit inference engine (AGENTS.md)

Do not type hook returns, let Typescript infer as much as possible

Extract component logic into custom hooks grouped by concern, not by component. Each hook should represent a cohesive domain of functionality (e.g., useSearch, useFilters, usePagination) rather than bundling all state into one useComponentState hook. Put each hook in its own `.ts` file.

Files:

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

autogpt_platform/backend/**/*.py

📄 CodeRabbit inference engine (.github/copilot-instructions.md)

autogpt_platform/backend/**/*.py: Use Python 3.11 (required; managed by Poetry via pyproject.toml) for backend development

Always run 'poetry run format' (Black + isort) before linting in backend development

Always run 'poetry run lint' (ruff) after formatting in backend development

autogpt_platform/backend/**/*.py: Use `poetry run ...` command for executing Python package dependencies

Use top-level imports only; avoid local/inner imports except for lazy imports of heavy optional dependencies like `openpyxl`

Use absolute imports with `from backend.module import ...` for cross-package imports; single-dot relative imports are acceptable for sibling modules within the same package; avoid double-dot relative imports

Do not use duck typing: avoid `hasattr`/`getattr`/`isinstance` for type dispatch; use typed interfaces/unions/protocols instead

Use Pydantic models over dataclass/namedtuple/dict for structured data

Do not use linter suppressors: no `# type: ignore`, `# noqa`, `# pyright: ignore`; fix the type/code instead

Prefer list comprehensions over manual loop-and-append patterns

Use early return with guard clauses first to avoid deep nesting

Use `%s` for deferred interpolation in `debug` log statements for efficiency; use f-strings elsewhere for readability (e.g., `logger.debug("Processing %s items", count)` vs `logger.info(f"Processing {count} items")`)

Sanitize error paths by using `os.path.basename()` in error messages to avoid leaking directory structure

Be aware of TOCTOU (Time-Of-Check-Time-Of-Use) issues: avoid check-then-act patterns for file access and credit charging

Use `transaction=True` for Redis pipelines to ensure atomicity on multi-step operations

Use `max(0, value)` guards for computed values that should never be negative

Keep files under ~300 lines; if a file grows beyond this, split by responsibility (extract helpers, models, or a sub-module into a new file)

Keep functions under ~40 lines; extract named helpers when a function grows longer

...
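A few of the backend conventions listed above, shown in one short sketch. The function names and the sample path are hypothetical and chosen only to illustrate the `max(0, value)` guard, `os.path.basename()` sanitizing, `%s` deferred interpolation and the list-comprehension preference; they are not taken from the codebase.

```python
import logging
import os

logger = logging.getLogger(__name__)


def remaining_credits(balance: int, cost: int) -> int:
    """Guard a computed value that should never be negative."""
    return max(0, balance - cost)


def describe_read_error(path: str) -> str:
    """Expose only the file name so the directory structure is not leaked."""
    return f"Could not read {os.path.basename(path)}"


def clean_items(items: list[str]) -> list[str]:
    # %s defers interpolation until the debug record is actually emitted.
    logger.debug("Processing %s items", len(items))
    # List comprehension instead of a manual loop-and-append.
    return [item.strip() for item in items if item.strip()]


print(remaining_credits(5, 8))
print(describe_read_error("/srv/data/private/report.csv"))
print(clean_items([" a ", "", "b"]))
```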

Files:

autogpt_platform/backend/backend/copilot/sdk/service.py

autogpt_platform/{backend,autogpt_libs}/**/*.py

📄 CodeRabbit inference engine (AGENTS.md)

Format Python code with `poetry run format`

Files:

autogpt_platform/backend/backend/copilot/sdk/service.py

🧠 Learnings (32)

📓 Common learnings

Learnt from: Pwuts

Repo: Significant-Gravitas/AutoGPT PR: 12284

File: autogpt_platform/frontend/src/app/api/openapi.json:11897-11900

Timestamp: 2026-03-04T23:58:18.476Z

Learning: Repo: Significant-Gravitas/AutoGPT — PR `#12284`

Backend/frontend OpenAPI codegen convention: In backend/api/features/store/model.py, the StoreSubmission and StoreSubmissionAdminView models define submitted_at: datetime | None, changes_summary: str | None, and instructions: str | None with no default. This is intentional to produce “required but nullable” fields in OpenAPI (properties appear in required[] and use anyOf [type, null]). This matches Prisma’s submittedAt DateTime? and changesSummary String?. Do not flag this as a required/nullable mismatch.

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12566

File: autogpt_platform/frontend/src/lib/autogpt-server-api/types.ts:968-974

Timestamp: 2026-03-26T00:32:06.673Z

Learning: In Significant-Gravitas/AutoGPT, the admin-facing methods in `autogpt_platform/frontend/src/lib/autogpt-server-api/client.ts` (e.g., `addUserCredits`, `getUsersHistory`, `getUserRateLimit`, `resetUserRateLimit`) intentionally follow the legacy `BackendAPI` pattern with manually defined types in `autogpt_platform/frontend/src/lib/autogpt-server-api/types.ts`. Migrating these admin endpoints to the generated OpenAPI hooks (`@/app/api/__generated__/endpoints/`) is a planned separate effort covering all admin endpoints together, not done piecemeal per PR. Do not flag individual admin type additions in `types.ts` as blocking issues.

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12440

File: autogpt_platform/backend/backend/copilot/workflow_import/converter.py:0-0

Timestamp: 2026-03-17T10:57:12.953Z

Learning: In Significant-Gravitas/AutoGPT PR `#12440`, `autogpt_platform/backend/backend/copilot/workflow_import/converter.py` was fully rewritten (commit 732960e2d) to no longer make direct LLM/OpenAI API calls. The converter now builds a structured text prompt for AutoPilot/CoPilot instead. There is no `response.choices` access or any direct LLM client usage in this file. Do not flag `response.choices` access or LLM client initialization patterns as issues in this file.

Learnt from: Pwuts

Repo: Significant-Gravitas/AutoGPT PR: 12740

File: autogpt_platform/frontend/src/app/api/openapi.json:0-0

Timestamp: 2026-04-13T14:19:19.341Z

Learning: Repo: Significant-Gravitas/AutoGPT — autogpt_platform

When adding new CoPilot tool response models (e.g., ScheduleListResponse, ScheduleDeletedResponse), update backend/api/features/chat/routes.py to include them in the ToolResponseUnion so the frontend’s autogenerated openapi.json dummy export (/api/chat/schema/tool-responses) exposes them for codegen. Do not hand-edit frontend/src/app/api/openapi.json.

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12445

File: autogpt_platform/backend/backend/copilot/sdk/service.py:1071-1072

Timestamp: 2026-03-17T06:48:26.471Z

Learning: In Significant-Gravitas/AutoGPT (autogpt_platform), the AI SDK enforces `z.strictObject({type, errorText})` on SSE `StreamError` responses, so additional fields like `retryable: bool` cannot be added to `StreamError` or serialized via `to_sse()`. Instead, retry signaling for transient Anthropic API errors is done via the `COPILOT_RETRYABLE_ERROR_PREFIX` constant prepended to persisted session messages (in `ChatMessage.content`). The frontend detects retryable errors by checking `markerType === "retryable_error"` from `parseSpecialMarkers()` — no SSE schema changes and no string matching on error text. This pattern was established in PR `#12445`, commit 64d82797b.

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: classic/original_autogpt/CLAUDE.md:0-0

Timestamp: 2026-04-08T17:27:26.657Z

Learning: LLM model selection can be customized via environment variables: SMART_LLM, FAST_LLM, and TEMPERATURE. These are loaded during config initialization.

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: classic/forge/CLAUDE.md:0-0

Timestamp: 2026-04-08T17:27:07.646Z

Learning: Applies to classic/forge/**/forge/agent/**/*.py : Use MultiProvider to route LLM requests to correct provider based on model name (OpenAI, Anthropic, Groq)

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12623

File: autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/ChatInput.tsx:172-177

Timestamp: 2026-03-31T14:04:45.723Z

Learning: The Copilot frontend (autogpt_platform/frontend/src/app/(platform)/copilot/) does support dark mode. Dark mode Tailwind CSS variants (dark:*) are appropriate and intentional in copilot UI components to provide proper contrast in both light and dark themes. Do not flag dark: utilities in copilot components as incorrect.

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/src/tests/**/*.spec.ts : Import `test` and `expect` from `./coverage-fixture` instead of `playwright/test` in E2E tests to auto-collect V8 coverage per test for Codecov reporting

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:28:40.841Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:40.841Z

Learning: Applies to autogpt_platform/frontend/src/**/__tests__/**/*.test.{ts,tsx} : Use Orval-generated MSW handlers from `@/app/api/__generated__/endpoints/{tag}/{tag}.msw.ts` for API mocking

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:28:40.841Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:40.841Z

Learning: Applies to autogpt_platform/frontend/src/app/(platform)/**/__tests__/**/*.test.{ts,tsx} : Write integration tests in `__tests__/` next to `page.tsx` using Vitest + RTL + MSW for new pages/features

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/**/__tests__/main.test.tsx : Start integration tests at page level with a `main.test.tsx` file before splitting into smaller test files

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/**/*.test.{ts,tsx} : Place unit tests co-located with the file being tested: `Component.test.tsx` next to `Component.tsx`

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:27:45.740Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: AGENTS.md:0-0

Timestamp: 2026-04-08T17:27:45.740Z

Learning: Applies to autogpt_platform/frontend/**/*.{test,spec}.{ts,tsx} : Use Vitest + RTL + MSW for integration tests as the primary testing approach (~90%, page-level), use Playwright for E2E critical flows, and use Storybook for design system components

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:27:45.740Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: AGENTS.md:0-0

Timestamp: 2026-04-08T17:27:45.740Z

Learning: Applies to autogpt_platform/frontend/**/*.{test,spec}.{ts,tsx} : Run frontend integration tests with `pnpm test:unit` (Vitest + RTL + MSW)

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/**/__tests__/**/*.test.{ts,tsx} : Use `findBy...` methods in integration tests instead of `getBy...` methods to avoid flaky tests by waiting for elements to appear asynchronously

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-08T17:27:57.501Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/AGENTS.md:0-0

Timestamp: 2026-04-08T17:27:57.501Z

Learning: Applies to autogpt_platform/frontend/**/*.spec.{ts,tsx} : Create a failing test first using `.fixme` marker (Playwright) when fixing a bug or adding a feature, then implement the fix and remove the fixme marker

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/**/__tests__/**/*.test.{ts,tsx} : Place integration tests in a `__tests__` folder next to the component being tested

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-02-27T10:45:49.499Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12213

File: autogpt_platform/frontend/src/app/(platform)/copilot/tools/RunMCPTool/helpers.tsx:23-24

Timestamp: 2026-02-27T10:45:49.499Z

Learning: Prefer using generated OpenAPI types from '@/app/api/__generated__/' for payloads defined in openapi.json (e.g., MCPToolsDiscoveredResponse, MCPToolOutputResponse). Use inline TypeScript interfaces only for payloads that are SSE-stream-only and not exposed via OpenAPI. Apply this pattern to frontend tool components (e.g., RunMCPTool) and related areas where similar SSE/openapi-discrepancies occur; avoid re-implementing types when a generated type is available.

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

📚 Learning: 2026-03-24T02:05:04.672Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12526

File: autogpt_platform/frontend/src/app/(platform)/copilot/CopilotPage.tsx:0-0

Timestamp: 2026-03-24T02:05:04.672Z

Learning: When gating React component logic on a React Query result (e.g., hooks like `useQuery` / `useGetV2GetCopilotUsage`), prefer destructuring and checking `isSuccess` (or aliasing it to a meaningful boolean like `isSuccess: hasUsage`) instead of relying on `!isLoading`. Reason: `isLoading` can be `false` in error/idle states where `data` may still be `undefined`, while `isSuccess` indicates the query completed successfully and `data` is populated.

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

📚 Learning: 2026-03-24T02:23:31.305Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12526

File: autogpt_platform/frontend/src/app/(platform)/copilot/components/RateLimitResetDialog/RateLimitResetDialog.tsx:0-0

Timestamp: 2026-03-24T02:23:31.305Z

Learning: In the Copilot platform UI code, follow the established Orval hook `onError` error-handling convention: first explicitly detect/handle `ApiError`, then read `error.response?.detail` (if present) as the primary message; if not available, fall back to `error.message`; and finally fall back to a generic string message. This convention should be used for generated Orval hooks even if the custom Orval mutator already maps details into `ApiError.message`, to keep consistency across hooks/components (e.g., `useCronSchedulerDialog.ts`, `useRunGraph.ts`, and rate-limit/reset flows).

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-03-31T14:04:42.444Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12623

File: autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/ChatInput.tsx:172-177

Timestamp: 2026-03-31T14:04:42.444Z

Learning: In the Copilot frontend components under autogpt_platform/frontend/src/app/(platform)/copilot/, Tailwind dark mode variants (e.g., `dark:*`) are intentional and should be allowed. Do not flag `dark:` utilities in these Copilot UI components as incorrect; they are used to ensure proper contrast and correct behavior in both light and dark themes.

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

📚 Learning: 2026-04-01T18:54:16.035Z

Learnt from: Bentlybro

Repo: Significant-Gravitas/AutoGPT PR: 12633

File: autogpt_platform/frontend/src/app/(platform)/library/components/AgentFilterMenu/AgentFilterMenu.tsx:3-10

Timestamp: 2026-04-01T18:54:16.035Z

Learning: In the frontend, the legacy Select component at `@/components/__legacy__/ui/select` is an intentional, codebase-wide visual-consistency pattern. During code reviews, do not flag or block PRs merely for continuing to use this legacy Select. If a migration to the newer design-system Select is desired, bundle it into a single dedicated cleanup/migration PR that updates all Select usages together (e.g., avoid piecemeal replacements).

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-07T09:24:16.582Z

Learnt from: 0ubbe

Repo: Significant-Gravitas/AutoGPT PR: 12686

File: autogpt_platform/frontend/src/app/(no-navbar)/onboarding/steps/__tests__/PainPointsStep.test.tsx:1-19

Timestamp: 2026-04-07T09:24:16.582Z

Learning: In Significant-Gravitas/AutoGPT’s `autogpt_platform/frontend` (Vite + `vitejs/plugin-react` with the automatic JSX transform), do not flag usages of React types/components (e.g., `React.ReactNode`) in `.ts`/`.tsx` files as missing `React` imports. Since the React namespace is made available by the project’s TS/Vite setup, an explicit `import React from 'react'` or `import type { ReactNode } ...` is not required; only treat it as missing if typechecking (e.g., `pnpm types`) would actually fail.

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-02T05:43:49.128Z

Learnt from: 0ubbe

Repo: Significant-Gravitas/AutoGPT PR: 12640

File: autogpt_platform/frontend/src/app/(no-navbar)/onboarding/steps/WelcomeStep.tsx:13-13

Timestamp: 2026-04-02T05:43:49.128Z

Learning: Do not flag `import { Question } from "phosphor-icons/react"` as an invalid import. `Question` is a valid named export from `phosphor-icons/react` (as reflected in the package’s generated `.d.ts` files and re-exports via `dist/index.d.ts`), so it should be treated as a supported named export during code reviews.

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-04-13T13:11:07.445Z

Learnt from: 0ubbe

Repo: Significant-Gravitas/AutoGPT PR: 12764

File: autogpt_platform/frontend/src/app/(platform)/library/components/SitrepItem/SitrepItem.tsx:143-145

Timestamp: 2026-04-13T13:11:07.445Z

Learning: In `autogpt_platform/frontend`, do not flag direct interpolation of `executionID` UUID strings into URL query parameters (e.g., `activeItem=${executionID}` in JSX/Next links). If the value is a UUID string matching `[0-9a-f-]`, it contains no reserved URL characters, so additional `encodeURIComponent` or Next.js object-based `href` encoding is unnecessary. Only treat it as an encoding issue if the query-param value is not guaranteed to be UUID-formatted (i.e., may include characters outside `[0-9a-f-]`).

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx

📚 Learning: 2026-04-08T17:28:55.678Z

Learnt from: CR

Repo: Significant-Gravitas/AutoGPT PR: 0

File: autogpt_platform/frontend/src/tests/AGENTS.md:0-0

Timestamp: 2026-04-08T17:28:55.678Z

Learning: Applies to autogpt_platform/frontend/src/tests/**/*.stories.tsx : Place Storybook tests co-located with components: `Component.stories.tsx` next to `Component.tsx`

Applied to files:

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts

📚 Learning: 2026-03-17T10:57:12.953Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12440

File: autogpt_platform/backend/backend/copilot/workflow_import/converter.py:0-0

Timestamp: 2026-03-17T10:57:12.953Z

Learning: In Significant-Gravitas/AutoGPT PR `#12440`, `autogpt_platform/backend/backend/copilot/workflow_import/converter.py` was fully rewritten (commit 732960e2d) to no longer make direct LLM/OpenAI API calls. The converter now builds a structured text prompt for AutoPilot/CoPilot instead. There is no `response.choices` access or any direct LLM client usage in this file. Do not flag `response.choices` access or LLM client initialization patterns as issues in this file.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-04-13T14:19:19.341Z

Learnt from: Pwuts

Repo: Significant-Gravitas/AutoGPT PR: 12740

File: autogpt_platform/frontend/src/app/api/openapi.json:0-0

Timestamp: 2026-04-13T14:19:19.341Z

Learning: Repo: Significant-Gravitas/AutoGPT — autogpt_platform

When adding new CoPilot tool response models (e.g., ScheduleListResponse, ScheduleDeletedResponse), update backend/api/features/chat/routes.py to include them in the ToolResponseUnion so the frontend’s autogenerated openapi.json dummy export (/api/chat/schema/tool-responses) exposes them for codegen. Do not hand-edit frontend/src/app/api/openapi.json.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-09T10:50:43.907Z

Learnt from: Bentlybro

Repo: Significant-Gravitas/AutoGPT PR: 0

File: :0-0

Timestamp: 2026-03-09T10:50:43.907Z

Learning: Repo: Significant-Gravitas/AutoGPT — File: autogpt_platform/backend/backend/blocks/llm.py

For xAI Grok models accessed via OpenRouter, the API returns `null` for `max_completion_tokens`. The convention in this codebase is to use the model's context window size as the `max_output_tokens` value in ModelMetadata. For example, Grok 3 uses 131072 (128k) and Grok 4 uses 262144 (256k). Do not flag these as incorrect max output token values.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-16T17:00:02.827Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12439

File: autogpt_platform/backend/backend/blocks/autogpt_copilot.py:0-0

Timestamp: 2026-03-16T17:00:02.827Z

Learning: In autogpt_platform/backend/backend/blocks/autogpt_copilot.py, the recursion guard uses two module-level ContextVars: `_copilot_recursion_depth` (tracks current nesting depth) and `_copilot_recursion_limit` (stores the chain-wide ceiling). On the first invocation, `_copilot_recursion_limit` is set to `max_recursion_depth`; nested calls use `min(inherited_limit, max_recursion_depth)`, so they can only lower the cap, never raise it. The entry/exit logic is extracted into module-level helper functions. This is the approved pattern for preventing runaway sub-agent recursion in AutogptCopilotBlock (PR `#12439`, commits 348e9f8e2 and 3b70f61b1).

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-15T15:30:09.706Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12385

File: autogpt_platform/backend/backend/copilot/tools/helpers.py:149-185

Timestamp: 2026-03-15T15:30:09.706Z

Learning: In autogpt_platform/backend/backend/copilot/tools/helpers.py, within execute_block, when InsufficientBalanceError occurs after post-execution credit charging (concurrent balance drain after pre-check passed), this is treated as a non-fatal billing leak: log at ERROR level with structured JSON fields `{"billing_leak": True, "user_id": ..., "cost": ...}` for monitoring/alerting, then return BlockOutputResponse normally. Discarding the output would worsen UX since the block already executed with potential side effects. Reuse the credit_model obtained during the pre-execution balance check (guarded by `if cost > 0 and credit_model:`) for the post-execution charge; do not perform a second get_user_credit_model call.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-02-26T17:02:22.448Z

Learnt from: Pwuts

Repo: Significant-Gravitas/AutoGPT PR: 12211

File: .pre-commit-config.yaml:160-179

Timestamp: 2026-02-26T17:02:22.448Z

Learning: Keep the pre-commit hook pattern broad for autogpt_platform/backend to ensure OpenAPI schema changes are captured. Do not narrow to backend/api/ alone, since the generated schema depends on Pydantic models across multiple directories (backend/data/, backend/blocks/, backend/copilot/, backend/integrations/, backend/util/). Narrowing could miss schema changes and cause frontend type desynchronization.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-04T08:04:35.881Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12273

File: autogpt_platform/backend/backend/copilot/tools/workspace_files.py:216-220

Timestamp: 2026-03-04T08:04:35.881Z

Learning: In the AutoGPT Copilot backend, ensure that SVG images are not treated as vision image types by excluding 'image/svg+xml' from INLINEABLE_MIME_TYPES and MULTIMODAL_TYPES in tool_adapter.py; the Claude API supports PNG, JPEG, GIF, and WebP for vision. SVGs (XML text) should be handled via the text path instead, not the vision path.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-04-01T04:17:41.600Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12632

File: autogpt_platform/backend/backend/copilot/tools/workspace_files.py:0-0

Timestamp: 2026-04-01T04:17:41.600Z

Learning: When reviewing AutoGPT Copilot tool implementations, accept that `readOnlyHint=True` (provided via `ToolAnnotations`) may be applied unconditionally to *all* tools—even tools that have side effects (e.g., `bash_exec`, `write_workspace_file`, or other write/save operations). Do **not** flag these tools for having `readOnlyHint=True`; this is intentional to enable fully-parallel dispatch by the Anthropic SDK/CLI and has been E2E validated. Only flag `readOnlyHint` issues if they conflict with the established `ToolAnnotations` behavior (e.g., missing/incorrect propagation relative to the intended annotation mechanism).

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-05T15:42:08.207Z

Learnt from: ntindle

Repo: Significant-Gravitas/AutoGPT PR: 12297

File: .claude/skills/backend-check/SKILL.md:14-16

Timestamp: 2026-03-05T15:42:08.207Z

Learning: In Python files under autogpt_platform/backend (recursively), rely on poetry run format to perform formatting (Black + isort) and linting (ruff). Do not run poetry run lint as a separate step after poetry run format, since format already includes linting checks.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-16T16:35:40.236Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12440

File: autogpt_platform/backend/backend/api/features/workflow_import.py:54-63

Timestamp: 2026-03-16T16:35:40.236Z

Learning: Avoid using the word 'competitor' in public-facing identifiers and text. Use neutral naming for API paths, model names, function names, and UI text. Examples: rename 'CompetitorFormat' to 'SourcePlatform', 'convert_competitor_workflow' to 'convert_workflow', '/competitor-workflow' to '/workflow'. Apply this guideline to files under autogpt_platform/backend and autogpt_platform/frontend.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-03-31T15:37:38.626Z

Learnt from: majdyz

Repo: Significant-Gravitas/AutoGPT PR: 12623

File: autogpt_platform/backend/backend/copilot/tools/agent_generator/fixer.py:37-47

Timestamp: 2026-03-31T15:37:38.626Z

Learning: When validating/constructing Anthropic API model IDs in Significant-Gravitas/AutoGPT, allow the hyphen-separated Claude Opus 4.6 model ID `claude-opus-4-6` (it corresponds to `LlmModel.CLAUDE_4_6_OPUS` in `autogpt_platform/backend/backend/blocks/llm.py`). Do NOT require the dot-separated form in Anthropic contexts. Only OpenRouter routing variants should use the dot separator (e.g., `anthropic/claude-opus-4.6`); `claude-opus-4-6` should be treated as correct when passed to Anthropic, and flagged only if it’s used in the OpenRouter path where the dot form is expected.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

📚 Learning: 2026-04-15T02:43:36.890Z

Learnt from: ntindle

Repo: Significant-Gravitas/AutoGPT PR: 12780

File: autogpt_platform/backend/backend/copilot/tools/workspace_files.py:0-0

Timestamp: 2026-04-15T02:43:36.890Z

Learning: When reviewing Python exception handlers, do not flag `isinstance(e, X)` checks as dead/unreachable if the caught exception `X` is a subclass of the exception type being handled. For example, if `X` (e.g., `VirusScanError`) inherits from `ValueError` (directly or via an intermediate class) and it can be raised within an `except ValueError:` block, then `isinstance(e, X)` inside that handler is reachable and should not be treated as dead code.

Applied to files:

autogpt_platform/backend/backend/copilot/sdk/service.py

🔇 Additional comments (5)

autogpt_platform/frontend/src/app/(platform)/copilot/__tests__/store.test.ts (1)

157-180: `copilotChatMode` rename coverage looks correct. The assertions and setter calls now match the updated store API and correctly preserve the non-persistence expectation for chat mode.

autogpt_platform/frontend/src/app/(platform)/copilot/components/ChatInput/__tests__/ChatInput.test.tsx (2)

11-20: Store mock update is aligned with the new API surface. Switching to `copilotChatMode`/`setCopilotChatMode` and including `copilotLlmModel` prevents drift from the updated Zustand store contract.

141-155: Toggle behavior assertions are correctly updated. The click-path expectations now validate the renamed setter and the expected mode transitions.

autogpt_platform/backend/backend/copilot/sdk/service.py (2)

682-701: Nice precedence order for override resolution. Env override → LaunchDarkly → default is a clean sequence here, and the string guard keeps the untyped flag API from feeding non-string values into model resolution.

2180-2194: Good call keeping the request contract tier-based. Accepting `CopilotLlmModel` here avoids leaking provider-specific model IDs into callers and keeps the transport contract stable if the backend mapping changes later.

…ant, UnboundLocalError guard, test coverage

- Extract _OPUS_COST_MULTIPLIER to module level alongside _MAX_STREAM_ATTEMPTS / _EMPTY_TOOL_CALL_LIMIT
- Extract _resolve_model_and_multiplier() helper to consolidate LD + request-tier model resolution
- Initialize sdk_model and model_cost_multiplier before the outer try block to prevent UnboundLocalError in finally when sdk_cwd setup returns early
- Add copilotLlmModel: "standard" to beforeEach store reset to prevent cross-test state leakage
- Assert copilotLlmModel resets to "standard" in clearCopilotLocalData test
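The `UnboundLocalError` guard mentioned in this commit can be illustrated with a minimal sketch. This is not the code from `service.py`: `run_turn` and its `fail_early` parameter are hypothetical, and only the variable names `sdk_model` and `model_cost_multiplier` come from the commit message. The point is that a `finally` clause runs even on an early return, so any name it reads must be bound before the `try` block.

```python
def run_turn(fail_early: bool) -> list[str]:
    """Hypothetical stand-in for a streaming turn; returns a log of what happened."""
    events: list[str] = []

    # Bind defaults BEFORE the try block so the finally clause can always read them.
    sdk_model = None
    model_cost_multiplier = 1.0

    try:
        if fail_early:
            # Early return (e.g. setup failed): the assignments below never run.
            return events
        sdk_model = "claude-opus-4-6"
        model_cost_multiplier = 5.0
        events.append("streamed")
    finally:
        # Runs on every exit path, including the early return above.
        events.append(f"recorded usage: model={sdk_model} x{model_cost_multiplier}")

    return events
```

Without the two default assignments, the early-return path would raise `UnboundLocalError` inside `finally`, masking whatever caused the early exit.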

…t subscription mode

🧪 E2E Test Report

PR #12786: feat(copilot): standard/advanced model toggle

Date: 2026-04-15

Environment

Bugs Found & Fixed

Bug 1:

…handler

Add describe block for ChatInput model toggle covering:

- render/hide based on feature flag
- standard→advanced and advanced→standard toggle calls
- hide when streaming
- toast notifications for both toggle directions

Also update store mock to use reactive mockCopilotLlmModel variable and reset it in afterEach for test isolation.

Drop Flag.COPILOT_MODEL, env_flag_string_override, _resolve_user_model_override and all associated tests — the UI toggle makes per-user LD targeting unnecessary.

Wire up Key.COPILOT_MODE the same way as Key.COPILOT_MODEL — read on init, write on change, clear on clearCopilotLocalData.

This pull request has conflicts with the base branch, please resolve those so we can evaluate the pull request.

- Keep both TestNormalizeModelName (model toggle) and TestTokenUsageNullSafety (merged from #12789) in service_helpers_test.py
- Add dedicated copilotLlmModel describe block: defaults, setter, localStorage persistence
- Assert clearCopilotLocalData also clears copilot-model localStorage key

…into feat/copilot-model-targeting-flag

Conflicts have been resolved! 🎉 A maintainer will review the pull request shortly.

…resetModules

The copilotChatMode and copilotLlmModel store fields are initialised from localStorage via IIFEs that run once at module-load time. The existing tests reset state with setState(), which bypasses the initialiser and leaves the 'fast' and 'advanced' branches uncovered for the codecov patch check. Use vi.resetModules() + dynamic import to get a fresh store instance with pre-populated localStorage, covering both initialiser branches.

Why

Users have different task complexity needs. Sonnet is fast and cheap for most queries; Opus is more capable for hard reasoning tasks. Exposing this as a simple toggle gives users control without requiring infrastructure complexity.

Opus costs 5× more than Sonnet per Anthropic pricing ($15/$75 vs $3/$15 per M tokens). Rather than adding a separate entitlement gate, the rate-limit multiplier (5×) ensures Opus turns deplete the daily/weekly quota proportionally faster — users self-limit via their existing budget.
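The quota effect described above can be sketched as follows. This is a minimal illustration, not the PR's implementation: the function names and the substring check for "opus" are assumptions, and only the 5× ratio and the published per-million-token prices come from the text.

```python
# Prices per million tokens (input, output), as quoted in the description.
OPUS_PRICE = (15.0, 75.0)
SONNET_PRICE = (3.0, 15.0)

# Assumed constant: the 5x ratio holds for both input and output pricing.
OPUS_COST_MULTIPLIER = OPUS_PRICE[0] / SONNET_PRICE[0]


def normalize_model_name(model: str) -> str:
    """Hypothetical helper: strip a provider prefix such as 'anthropic/'."""
    return model.split("/", 1)[-1]


def cost_multiplier(model: str) -> float:
    """Return the quota multiplier for a resolved model name (assumed rule)."""
    is_opus = "opus" in normalize_model_name(model).lower()
    return OPUS_COST_MULTIPLIER if is_opus else 1.0


def quota_tokens(raw_tokens: int, model: str) -> int:
    """Tokens charged against the rate-limit quota, not the billed cost."""
    return round(raw_tokens * cost_multiplier(model))
```

Under these assumptions a 1,000-token Opus turn counts as 5,000 tokens against the quota, while the same turn on Sonnet counts as 1,000. Real cost reporting is unaffected because it uses API-reported values.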

What

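- `CopilotLlmModel = Literal["standard", "advanced"]`: model-agnostic tier names (not tied to Anthropic model names)
- `"advanced"` → `claude-opus-4-6`, `"standard"` → `config.model` (currently Sonnet)
- Rate-limit multiplier of 5.0 for Opus turns (Redis counters only); does not affect `PlatformCostLog` or `cost_usd`, which use real API-reported values
- Model preference persisted in localStorage via `Key.COPILOT_MODEL` so the preference survives page refresh
- `claude_agent_max_budget_usd` reduced from $15 to $10

How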

Backend

- `CopilotLlmModel` type added to `config.py`, imported in routes/executor/service
- `stream_chat_completion_sdk` accepts `model: CopilotLlmModel | None`
- `_normalize_model_name` strips the OpenRouter provider prefix
- `model_cost_multiplier` (1.0 or 5.0) computed from final resolved model name, passed to `persist_and_record_usage` → `record_token_usage` (Redis only)

Frontend

- `ModelToggleButton` component: sky-blue, star icon, "Advanced" label when active
- `copilotModel` state in `useCopilotUIStore` with localStorage hydration
- `copilotModelRef` pattern in `useCopilotStream` (avoids recreating `DefaultChatTransport`)
- Toggle gated on `showModeToggle && !isStreaming` in `ChatInput`

Checklist